•

On shared context

How are we going to manage shared context within a team? Patterns from the past and ideas for the future.

At an individual level, we've learnt how to manage context so that AI systems produce useful results. The quality of the outcome depends directly on the quality of the context I provide.

At the team level, the problem changes. Context is no longer held by one person. It is distributed across roles, conversations, systems, and decisions. For AI to be useful across a group, that context has to be shared.

Capturing and maintaining shared context is difficult. It has been a persistent problem for decades, well before AI.

- The problem is not new

- What shared context actually is

- How do you keep it from rotting?

- The current tools don't solve it

- So what does shared context actually demand

The problem isn't new

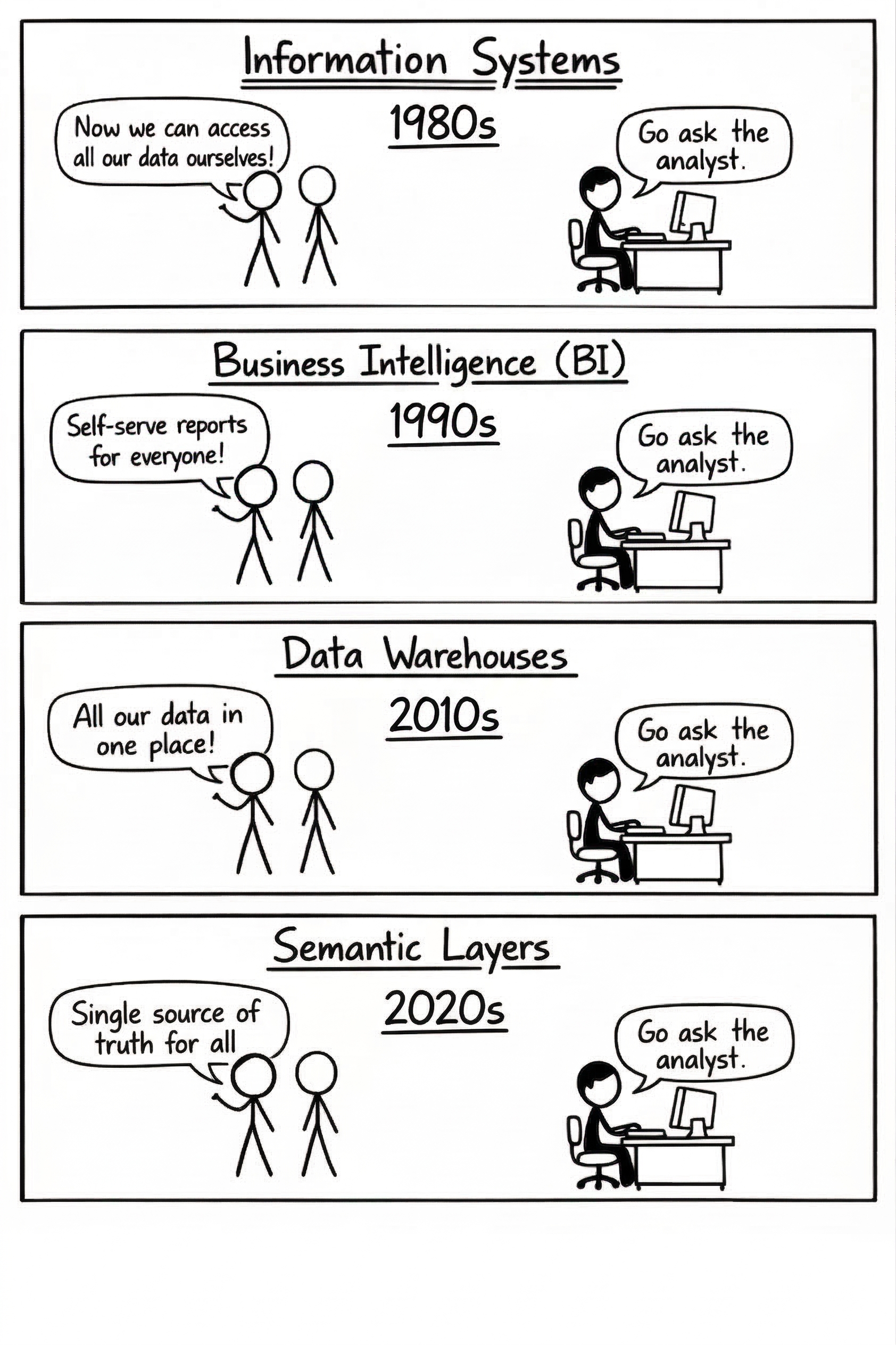

Think about the history of data in organizations. Information systems gave way to BI, which gave way to data warehouses, which gave way to semantic layers. Every generation promised the same thing: self-serve access to institutional knowledge. Every generation delivered the same outcome: go ask the analyst.

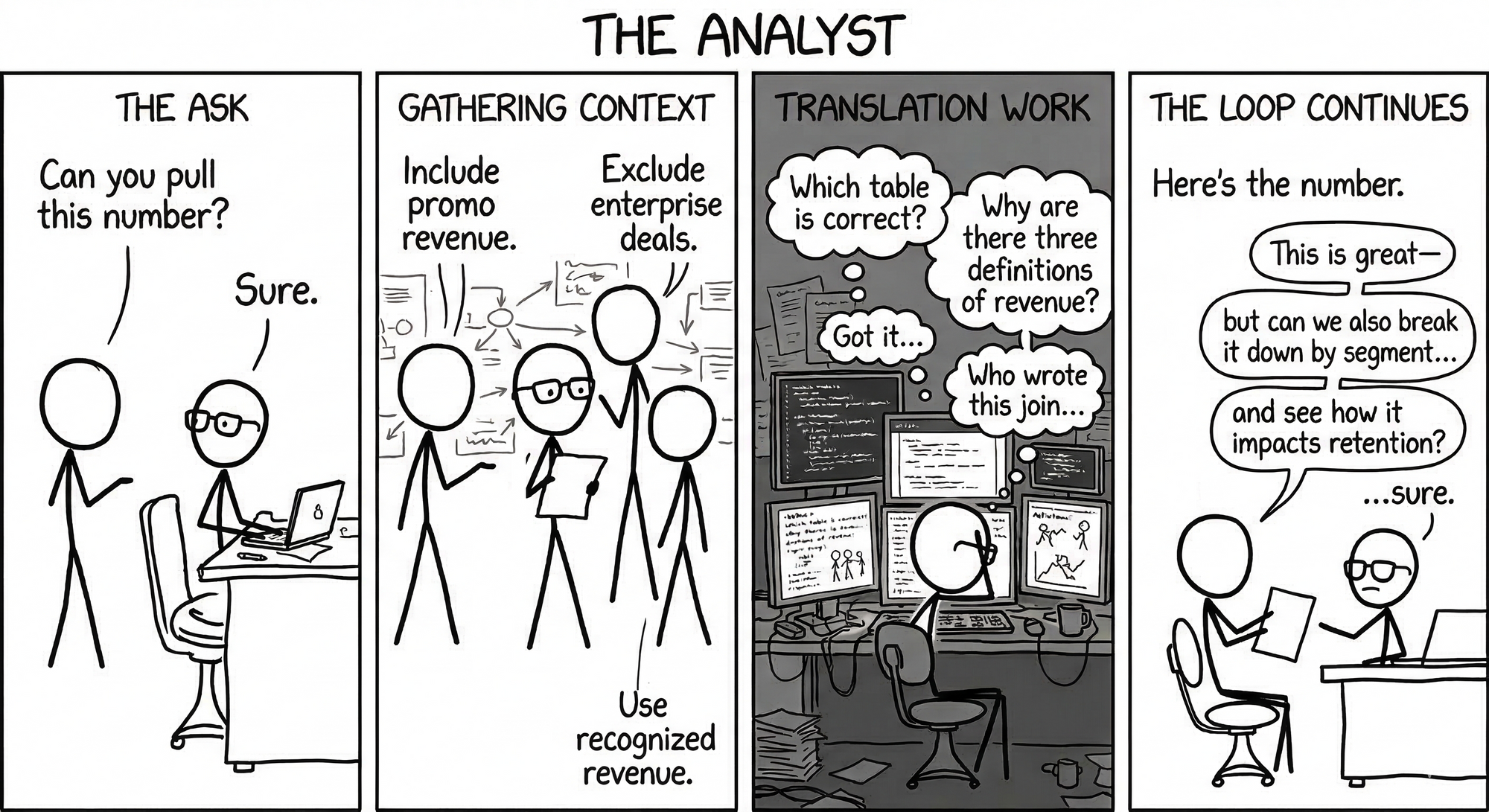

The analyst is the person who captures "context" during a meeting, maintains a piece of it in code, holds a piece of it in their head, and accepts that a piece of it is always a little lost in translation. They exist because there's a persistent skill gap between the people who have business context and the people who can encode that context into technical systems — pipelines, schemas, queries, data models.

That history teaches us something important.

The bottleneck for AI-native teams isn't the AI.

It's the shared context layer that makes AI accurate and useful across a group. And the reason that layer doesn't exist yet is the same reason self-serve analytics never fully arrived: context is expensive to capture, expensive to maintain, and it lives in the gap between people who understand the business and people who understand the systems.

What shared context actually is

Shared context is the institutional knowledge distributed across every person on a team — the stuff that makes the difference between AI that's impressive and AI that's right.

It cannot be scraped. Scrapes can seed context, but it goes stale quickly without an operating model to keep it fresh.

It cannot be written down by any one person or a handful of custodians. Each person can only document part of a team's context. And expecting a few to get to full context from everyone is an operational fantasy.

Shared context is most reliably created through discussion, collaboration and actions. While decisions are being made, tradeoffs are being argued, and corrections are happening in real time. It has to be captured at the moment of shared understanding, not retroactively.

Once captured, how do you keep it from rotting?

This is the harder question. What goes inside that shared context store? What if it's junk? What if someone was role-playing a scenario with the AI and now the system thinks they're a space data center engineer? Who's responsible for quality?

Historically, whenever shared context has been precious enough to protect, we've spun out a dedicated team to manage it. Data teams. Platform teams. Governance teams. Documentation teams. The pattern is always the same: a small group of people becomes the bottleneck, the very thing they were created to eliminate.

The current tools don't solve it

Individual context tools work for individuals. Skills files in a repo. Personal memory. Local prompt libraries. But they're not multiplayer, and they're not accessible to the people who hold the most business context.

Slack is multiplayer and accessible, but it's where shared context goes to die — buried in threads, disconnected from data, with no mechanism to extract signal from noise.

The design space is barren because these tools treat context capture as something bolted on after collaboration happens. But context that requires a separate act to capture doesn't get captured.

So what does shared context actually demand?

Whether you build or buy — these are the principles that govern the problem. Violate them and you'll reproduce the same bottleneck for the fifth consecutive decade.

I. Capture in the flow, not after it

Shared context must be captured at the moment of shared understanding: during the discussion, at the point of decision, in the flow of collaborative action.

The distance between "having a conversation" and "capturing the insight" must be zero. Context that requires a separate act of documentation never get captured. Every system, tool, and process should treat context capture as a byproduct of work, not a chore that follows it.

II. Many authors, no single owner

In any team, one person holds partial context. Shared context cannot be authored by an individual, it must be assembled from many. Any system that depends on a single maintainer, a dedicated documentation team, or a single point of extraction will produce an incomplete and decaying picture.

Every previous attempt to centralize context ownership — information systems teams, BI teams, analytics engineering teams — created the chokepoint it was meant to eliminate. Design for contribution from many, not transcription by one.

III. Living, not scraped

Scraping sources like wikis, Confluence, or Notion produces a snapshot of what was considered true at a point in time. That snapshot does not reflect whether the information is still accurate.

Shared context needs to be continuously created, updated, and validated by the people doing the work. Without active maintenance, its reliability declines quickly. In practice, context that is not regularly revisited is likely to be outdated within weeks rather than years.

IV. Governed like Wikipedia, not like a codebase

Every attempt to govern shared knowledge through formal review creates a bottleneck that kills freshness.

The governance model that works at scale is the one we already know: open contribution, communal correction, continuous grooming. Anyone can add. Anyone can edit. Wrong context gets fixed by the people closest to the knowledge, not by a review board three weeks later. The space-data-center-engineer problem resolves itself the same way Wikipedia handles vandalism — quickly, communally, without a committee.

V. Accessible across the skill gap

The people with the deepest business context rarely have the skills to encode it into technical systems. The people who maintain technical systems rarely have the full business context. This gap has defeated every generation of data tooling. Shared context must be legible and editable by both sides — technical and non-technical, engineer and operator, builder and business user — or it will reproduce the same translator bottleneck that self-serve analytics never overcame. If your system isn't multiplayer across this divide, it isn't shared.

VI. Context flows to the point of action

Shared context that exists in a wiki disconnected from where data lives, where code runs, where decisions execute — that's just nicer documentation. Context must be wired into the operational data stores, pipelines, agents, and workflows where it actually makes AI output accurate. Context that can't reach the point of action is trivia.

Most teams understand this. It's the natural first move: take what you know and push it into the systems that need it. Context to action. This is the direction everyone builds first. From RAG to knowledge graphs to context engineering.

VII. Action flows back to context

This is the direction almost nobody builds. When AI uses your shared context and gets something wrong, that's a signal. When the AI asks a question the context should have answered, that's a gap. When a human corrects an AI output, that correction contains knowledge that should flow back into shared context.

AI has commoditized execution. Individual productivity is exploding, but it hasn’t translated into 10x velocity for the organization.

When execution is on tap, context becomes the bottleneck. Context is the job.

The teams that figure out shared context will break this bottleneck to unlock the full benefits of being AI-native. Build it.